- Experiment - An experiment is a defined set of operations that produces a probabilistic result.

- Outcome - An outcome is any one possible result of an experiment. In set theory, this

would be an element.

Notation: oi

- Event - An event is a set of one or more outcomes.

Notation: Ei

- Sample Space - The sample space is the set of all possible outcomes.

Notation: S

- Empty Event - An empty event is an event with no outcomes in it.

Notation: {} or ∅

- Trial - A trial is one performance of the experiment.

Notation: Ti

- Stage - A stage is one of the operations performed that make up an experiment.

Notation: Si

- Complement of an Event - The complement of an event is the set of all outcomes that are in

the sample space, but are not the event.

Notation: ~ E1 or E1'

Let S = {o1,o2,o3,o4,o5}

Let E1 = {o1,o2,o3}

Then E1' = {o4,o5}

- Intersection of Events - The intersection of two events is the set of all outcomes that are

members of both events.

Notation: E1 ∩ E2

Let E1 = {o1,o3,o5}

Let E2 = {o1,o2,o3}

Then E1 ∩ E2 = {o1,o3}

- Union of Events - The union of two events is the set of all outcomes that are members of

either or both events.

Notation: E1 ∪ E2

Let E1 = {o1,o3,o5}

Let E2 = {o1,o2,o3}

Then E1 ∪ E2 = {o1,o2,o3,o5}

- Independent Events - Independent events do not influence each other.

- Disjoint (Mutually Exclusive) Events - Disjoint (or mutually exclusive) events have

no common outcomes.

Notation: E1 ∩ E2 = ∅

Let E1 = {o1,o2,o3}

Let E2 = {o4,o5}

Then E1 ∩ E2 = {} = ∅

- Dependent Events - Dependent events are events whose probabilities change when other events occur. Disjoint events with nonzero probabilities are dependent, because when one occurs the other cannot occur on the same trial.

- Pairwise Disjoint Events - Multiple events where each outcome is an element of no more

than one event.

Let E1 = {o1,o2}

Let E2 = {o4,o5}

Let E3 = {o3}

Then E1 and E2 and E3 are pairwise disjoint.

- Partition of the Sample Space - Pairwise disjoint events whose union forms the sample space

are a partition of the sample space.

Let S = {o1,o2,o3,o4,o5}

Let E1 = {o1,o2}

Let E2 = {o4,o5}

Let E3 = {o3}

Then E1, E2, and E3 form a partition of sample space S.

Properties of a Partition of the Sample Space:

All of the events are pairwise disjoint.

The union of all of the events equals the sample space itself.

Each outcome in the sample space is an element of exactly one of the events.
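The set operations defined above map directly onto Python's built-in `set` type. The following is a minimal sketch, assuming outcomes are modeled as strings; the helper name `is_partition` is made up for this illustration and is not part of the original notation.

```python
from itertools import combinations

# Outcomes are modeled as strings, events as sets of outcomes.
S = {"o1", "o2", "o3", "o4", "o5"}  # sample space
E1 = {"o1", "o3", "o5"}
E2 = {"o1", "o2", "o3"}

complement_E1 = S - E1   # E1': outcomes in S that are not in E1
intersection = E1 & E2   # E1 ∩ E2
union = E1 | E2          # E1 ∪ E2


def is_partition(events, sample_space):
    """True if the events are pairwise disjoint and their union is the sample space."""
    pairwise_disjoint = all(a.isdisjoint(b) for a, b in combinations(events, 2))
    covers_space = set().union(*events) == sample_space
    return pairwise_disjoint and covers_space


print(sorted(complement_E1))  # ['o2', 'o4']
print(sorted(intersection))   # ['o1', 'o3']
print(sorted(union))          # ['o1', 'o2', 'o3', 'o5']
print(is_partition([{"o1", "o2"}, {"o4", "o5"}, {"o3"}], S))  # True
print(is_partition([E1, E2], S))                              # False
```

The last call returns False because E1 and E2 share outcomes and do not cover o4.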

- Types of data:

| Categorical | Numerical |
| --- | --- |
| Values that cannot be measured using measuring tools | Values that can be measured using instruments |
| The color, shape, or other discrete property of a sampled item | Length, volume, mass, or other measured value of sampled items |
| A yes-no, multiple choice, or integer outcome of a random event | Any other value that can be measured with instruments |
| (e.g. a rolled die, playing card, genetic trait, or drawn ball) | (e.g. temperature, density, pressure, or other physical property) |

| Count | Portion |
| --- | --- |
| Number of occurrences of values that cannot be measured | The portion of a given value as a fraction of that value |
| How many instances have the color, shape, or other property | Probabilities are portions of certainty - Fractions of wholes |
| A non-negative integer number of outcomes of a random event | Restricted to these values: 0 ≤ p ≤ 1 |
| (e.g. number of heads, number with the trait, or ball count) | (e.g. probability, 1/2 full, or part of any measured value) |

- Replacement of Objects or Outcomes:

| Replacement | No Replacement |
| --- | --- |
| Objects or outcomes are put back before the next drawing. | Objects or outcomes become unavailable or disallowed once used. |
| An infinite supply of objects is available. | Race and tournament finishing orders |
| Outcomes are part of a permanent random device. | Objects are kept or discarded. |
| (e.g. a flipped coin, a rolled die, or a spinner) | (e.g. a dealt hand of cards, books on a shelf, or a committee) |

- The Order of Objects or Outcomes:

| Order Matters | No Order |
| --- | --- |
| Outcomes are noted or arranged as they are picked. | Objects or outcomes are chosen simultaneously. |
| Race and tournament finishing orders. | Rearranging objects does not change the outcome. |
| Outcomes are used for different purposes. | Objects are dumped into a holder. |
| (e.g. president, vice president, secretary) | (e.g. a hand of cards, colored checkers, or a committee) |

Note that counts are not numerical data.

The following are methods of counting the numbers of items and outcomes in everyday life:

- The Number of an Event - The number of an event is the number of outcomes in it.

Notation: n(E1)

Let E1 = {o1,o3,o5}

Then n(E1) = 3

Note: n(∅) = 0

- Event Addition Rule - The following formula finds the number of the union of two events:

Notation: n(E1 ∪ E2) = n(E1) + n(E2) − n(E1 ∩ E2)

Let S = {o1,o2,o3,o4,o5}

Let E1 = {o1,o3,o5}

Let E2 = {o1,o2,o3}

So E1 ∩ E2 = {o1,o3}

Then n(E1 ∪ E2) = n(E1) + n(E2) − n(E1 ∩ E2) = 3 + 3 − 2 = 4

- Disjoint Event Addition Rule - The following formula finds the number of the union of two disjoint events:

Notation: n(E1 ∪ E2) = n(E1) + n(E2)

Must be disjoint!

Let S = {o1,o2,o3,o4,o5}

Let E1 = {o1,o2,o3}

Let E2 = {o4,o5}

So E1 ∩ E2 = ∅

Then n(E1 ∪ E2) = n(E1) + n(E2) = 3 + 2 = 5

- The Number of the Complement of an Event - The following formula finds the number of the complement of an event:

Notation: n(E1') = n(S) − n(E1)

Let S = {o1,o2,o3,o4,o5}

Let E1 = {o1,o2,o3}

Then n(E1') = n(S) − n(E1) = 5 − 3 = 2
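The three counting rules above can be checked against direct set operations. This is a small sketch using the same example events; the function name `n` mirrors the notation, and the names `D1` and `D2` for the disjoint pair are chosen here only to keep the two examples apart.

```python
S = {"o1", "o2", "o3", "o4", "o5"}


def n(event):
    """The number of an event: how many outcomes it contains."""
    return len(event)


# Event addition rule (the events overlap in o1 and o3).
E1 = {"o1", "o3", "o5"}
E2 = {"o1", "o2", "o3"}
union_count = n(E1) + n(E2) - n(E1 & E2)

# Disjoint event addition rule.
D1 = {"o1", "o2", "o3"}
D2 = {"o4", "o5"}
disjoint_union_count = n(D1) + n(D2)

# Number of the complement.
complement_count = n(S) - n(D1)

print(union_count, n(E1 | E2))           # 4 4
print(disjoint_union_count, n(D1 | D2))  # 5 5
print(complement_count, n(S - D1))       # 2 2
```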

- The Multiplication Principle - When successive stages of an experiment are independent

(do not influence each other), the number of outcomes of the experiment is the product of the

numbers of outcomes of each of the stages.

Let n(S1) = 4

Let n(S2) = 3

Let n(S3) = 5

Then n(S) = 4 • 3 • 5 = 60

- The Power Principle - When successive stages of an experiment are identical and do not

influence each other, the number of outcomes of the experiment is the number of outcomes of

one stage raised to the power of the number of stages.

Let n(S1) = n(S2) = n(S3) = 5

Then n(S) = 5^3 = 125

This works when drawn objects are replaced, and the order of the outcomes matters.

Where there are n objects to choose from, and r choices are made with replacement, and order matters, the number of outcomes is n^r.

- The Factorial Principle - The number of ways to arrange n distinct objects in a definite

order without replacement is the nth factorial, written n!

The nth factorial is the product of all of the integers from 1 to n.

Let n = 5.

Then n! = 5! = 5 • 4 • 3 • 2 • 1 = 120

This works when drawn objects are not replaced, the order of the outcomes matters, and all

of the objects are taken.
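The multiplication, power, and factorial principles can be confirmed by brute-force enumeration. The sketch below uses `itertools` to list every outcome and compares the counts with the formulas; modeling each stage's outcomes as `range(k)` is an assumption made only for illustration.

```python
from itertools import permutations, product
from math import factorial, prod

# Multiplication principle: stages with 4, 3, and 5 outcomes.
stage_sizes = [4, 3, 5]
multi_stage = list(product(*(range(size) for size in stage_sizes)))

# Power principle: 3 identical stages with 5 outcomes each.
identical_stages = list(product(range(5), repeat=3))

# Factorial principle: every ordering of 5 distinct objects.
arrangements = list(permutations(range(5)))

print(len(multi_stage), prod(stage_sizes))  # 60 60
print(len(identical_stages), 5 ** 3)        # 125 125
print(len(arrangements), factorial(5))      # 120 120
```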

- The Anagram Principle - The number of ways to arrange n objects composed of g groups of

ri identical objects in each group is the number of anagrams of n items of g kinds

with counts of each kind r1, r2, ... rg,

written A(n,r1,r2, ... rg).

The sum of all of the ri values must equal n.

Then A(n,r1,r2,...,rg) = n! / (r1! r2! ... rg!)

Example: Let n = 5

Let g = 3

Let r1 = 2

Let r2 = 1

Let r3 = 2

Then A(n,r1,r2,r3) = n! / (r1! r2! r3!) = 5! / (2! 1! 2!) = 30

This works when drawn objects are not replaced, the order of the objects matters, some

objects are indistinguishable from others, and all of the objects are taken.
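As a check on the anagram formula, the sketch below computes A(5,2,1,2) and compares it with the number of distinct orderings of the letters AABCC (two A's, one B, two C's). The function name `anagram_count` and the choice of letters are illustrative assumptions.

```python
from itertools import permutations
from math import factorial


def anagram_count(*group_sizes):
    """n! / (r1! r2! ... rg!), where n is the sum of the group sizes."""
    count = factorial(sum(group_sizes))
    for r in group_sizes:
        count //= factorial(r)  # exact at every step, so integer division is safe
    return count


# Brute force: permutations() treats the two A's as different objects,
# so collecting the results in a set removes the duplicate arrangements.
distinct_orderings = set(permutations("AABCC"))

print(anagram_count(2, 1, 2))   # 30
print(len(distinct_orderings))  # 30
```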

- The Permutation Principle - The number of ways to arrange r objects picked from a pool of

n distinct objects, in a definite order with no replacement is the number of permutations of

n items, picked r at a time, written P(n,r).

Let n = 5

Let r = 3

Then P(n,r) = n! / (n−r)! = 5! / (5−3)! = 60

This works when drawn objects are not replaced, and the order of the outcomes matters.

- The Combination Principle - The number of ways to choose a group of r objects from a pool of

n distinct objects, with no order and no replacement, is the number of combinations of

n items, chosen r at a time, written C(n,r).

Let n = 5

Let r = 3

Then C(n,r) = n! / (r! (n−r)!) = 5! / (3! (5−3)!) = 10

This works when drawn objects are not replaced, and the order of the outcomes does not matter.
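Python's standard library offers both the formulas (`math.perm`, `math.comb`, available from Python 3.8) and the enumerations (`itertools.permutations`, `itertools.combinations`). The sketch below compares them for n = 5 and r = 3; the pool "ABCDE" is just a stand-in for five distinct objects.

```python
from itertools import combinations, permutations
from math import comb, factorial, perm

pool = "ABCDE"  # n = 5 distinct objects
r = 3

ordered_picks = list(permutations(pool, r))    # order matters, no replacement
unordered_picks = list(combinations(pool, r))  # no order, no replacement

print(len(ordered_picks), perm(5, 3))    # 60 60
print(len(unordered_picks), comb(5, 3))  # 10 10

# Each unordered group of r objects can be arranged in r! orders.
print(perm(5, 3) == comb(5, 3) * factorial(r))  # True
```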

- The Recombination Principle - The number of ways to choose r objects from a pool of n distinct objects, with replacement and no order, is the number of recombinations of n items, received r at a time, written R(n,r).

Let n = 5

Let r = 3

Then R(n,r) = (n+r−1)! / (r! (n−1)!) = C(n+r−1,r) = 7! / (3! (5−1)!) = 35

This works when drawn objects are replaced, and the order of the outcomes does not

matter.

NOTE: This is rare in probability. It is included for studying unusual counting

problems that do occur in daily life. Special methods

(discussed later) are needed to obtain equally likely outcomes

in this kind of experiment.
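What this text calls a recombination corresponds to `itertools.combinations_with_replacement` in Python. The sketch below checks R(5,3) both by formula and by enumeration; the function name `recombination_count` is made up for this example.

```python
from itertools import combinations_with_replacement
from math import comb


def recombination_count(n, r):
    """R(n,r) = C(n+r-1, r): selections with replacement and no order."""
    return comb(n + r - 1, r)


selections = list(combinations_with_replacement("ABCDE", 3))

print(recombination_count(5, 3))  # 35
print(len(selections))            # 35
```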

The following are probability measures that occur in everyday life.

- Probability - Probability is the fraction of trials in which an event is expected to occur.

Probability has the following properties:

- A probability is a fraction in the range of values from 0 to 1.

- If the probability of an event is 0, the event never occurs.

- If the probability of an event is 1, the event always occurs.

- Weight - The probability of an outcome is its weight.

Notation: wi = Pr(oi)

- Odds - Odds are an obsolete measure of probability in the form S:F.

- S is a number, usually an integer, representing the expected number of successes in S+F

trials.

- F is a number on the same scale representing the expected number of failures in S+F

trials.

Example: If the probability of an event is 2/3, then the odds are 2:1

(read "2 to 1") in favor of the event.

Often an event of low probability is represented as "F:S against"

(e.g. Pr(A) = 1/3 gives odds of 2:1 against A).

It is not nearly as useful as probability.
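Converting between probability and odds is simple arithmetic: odds of S:F in favor correspond to a probability of S / (S+F). The sketch below uses exact fractions; both function names are hypothetical helpers, not standard library calls.

```python
from fractions import Fraction


def odds_in_favor(p):
    """Return (S, F) in lowest terms for a probability p with 0 <= p < 1."""
    p = Fraction(p)
    ratio = p / (1 - p)  # raises ZeroDivisionError when p == 1
    return ratio.numerator, ratio.denominator


def probability_from_odds(successes, failures):
    """Return the probability for odds of successes:failures in favor."""
    return Fraction(successes, successes + failures)


print(odds_in_favor(Fraction(2, 3)))  # (2, 1)
print(odds_in_favor(Fraction(1, 3)))  # (1, 2)
print(probability_from_odds(2, 1))    # 2/3
```

The second result, 1:2 in favor, is the same statement as 2:1 against.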

- Sample Frequency Method - The sample frequency method consists of repeating the experiment

a large number of times and recording the number of occurrences of each outcome to find their

probabilities.

- Analytical Method - The analytical method consists of the use of mathematical calculations

to find the probability of each outcome in an experiment.

- Probability of an Event - The probability of an event is the sum of the probabilities of its outcomes:

Let Ei = {oj,oj+1, ... ,ok}

Then Pr(Ei) = wj + wj+1 + ... + wk

- Equally Likely Outcomes - When all of the outcomes are equally likely, the following

formulas apply:

wi = 1 / n(S)

Pr(Ei) = n(Ei) / n(S)

- Sample Space Probability - Since the sample space contains all of the outcomes in the

experiment, the probability of the sample space is 1.

Notation: Pr(S) = 1

- Empty Event Probability - Since the empty event contains none of the outcomes in the

experiment, the probability of the empty event is 0.

Notation: Pr(∅) = 0
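The weight and event-probability definitions above can be sketched with a single fair die, which is an example chosen here and not taken from the text. Each outcome gets weight 1 / n(S), and the probability of an event is the sum of the weights of its outcomes.

```python
from fractions import Fraction

S = {1, 2, 3, 4, 5, 6}  # sample space of one fair die (assumed example)

# Equally likely outcomes: every weight is 1 / n(S).
weights = {outcome: Fraction(1, len(S)) for outcome in S}


def pr(event):
    """Probability of an event: the sum of the weights of its outcomes."""
    return sum((weights[outcome] for outcome in event), Fraction(0))


even = {2, 4, 6}

print(pr(even))   # 1/2
print(pr(S))      # 1
print(pr(set()))  # 0
```

For equally likely outcomes, `pr(even)` agrees with n(E) / n(S) = 3/6.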

- The Value of an Outcome - The value of an outcome is how much the outcome is worth to

someone performing or observing the experiment.

- Probability of the Complement of an Event - The probability of the complement of the

event E is:

Pr(E') = 1 - Pr(E)

- Event Probability Addition Rule - For any events E and F:

Pr(E ∪ F) = Pr(E) + Pr(F) - Pr(E ∩ F)

Note that the outcomes in E ∩ F are counted twice, once in Pr(E) and once in Pr(F), so Pr(E ∩ F) must be subtracted once.

- Mutually Exclusive Event Probability Addition Rule - For any disjoint events E and F:

Pr(E ∪ F) = Pr(E) + Pr(F)

Note that, since E ∩ F is empty in disjoint events, its probability is 0, and it does

not have to be subtracted out.

- Independent Event Probability Multiplication Rule - When events are independent:

Pr(E ∩ F) = Pr(E) • Pr(F)

- Conditional Probability - The conditional probability of the event A, given that event B

is known to have occurred, is Pr(A|B)

Pr(A|B) = Pr(A ∩ B) / Pr(B)

The probability of the event A, given that B has occurred, is the probability that both

events occurred, divided by the probability that event B occurred.

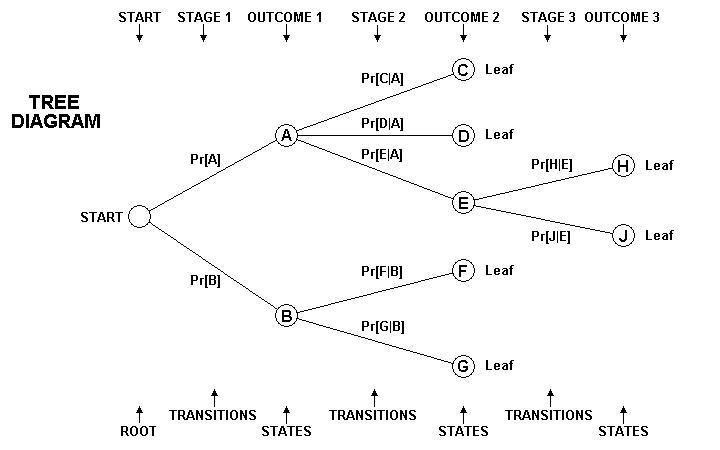

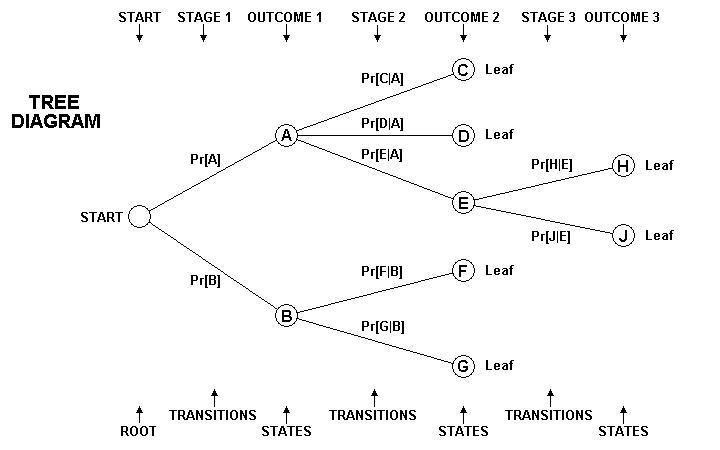
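The complement, addition, multiplication, and conditional probability rules can be tried out on one fair die. The events A (an even number) and B (a number from 1 to 4) are assumptions picked for this sketch because they happen to be independent.

```python
from fractions import Fraction

S = {1, 2, 3, 4, 5, 6}  # one fair die, equally likely outcomes


def pr(event):
    """Pr(E) = n(E) / n(S) for equally likely outcomes."""
    return Fraction(len(event), len(S))


A = {2, 4, 6}     # an even number is rolled
B = {1, 2, 3, 4}  # a number from 1 to 4 is rolled

pr_complement = 1 - pr(A)                  # Pr(A')
pr_union = pr(A) + pr(B) - pr(A & B)       # Pr(A ∪ B)
pr_A_given_B = pr(A & B) / pr(B)           # Pr(A|B), requires Pr(B) > 0
independent = pr(A & B) == pr(A) * pr(B)   # multiplication rule holds?

print(pr_complement)  # 1/2
print(pr_union)       # 5/6
print(pr_A_given_B)   # 1/2
print(independent)    # True
```

Here Pr(A|B) equals Pr(A), which is another way of seeing that knowing B occurred does not change the probability of A.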

- Stochastic Process - An experiment that is made up of multiple stages is called a

stochastic process.

- The Parts of a Probability Tree:

- Root - The starting point of the tree is the root.

- Node - Any stopping point on a tree is a node, represented by a circle.

- State - The node at the current point in the performance of the experiment is the state.

- Branch - A portion of a tree connecting two nodes is a branch, represented by a

line.

- Branch Probability - A branch probability is the conditional probability of following a

particular branch, represented by a weight placed by the line representing the branch.

- Leaf - A leaf is an ending node, representing a final outcome of an experiment. The

experiment ends when it reaches a leaf.

- Leaf Probability - The leaf probability of a leaf is the probability of reaching that

leaf.

- Stage - A stage is any of the several sequential acts that make up the experiment.

- Stage Outcome - The result of performing a stage is the stage outcome.

- Transition - A transition is the performance of a stage, resulting in a stage outcome,

and changing the state of the experiment.

- The number of Outcomes on a Tree - The number of outcomes on a tree is found by counting

the leaf nodes.

- Use of the tree to represent an experiment:

- Place the root at the left, and add each stage to the right of the previous stage.

- Use a branching of lines to represent each stage in the experiment. Place a branch

probability (if known) on each branch.

- Use a column of nodes (circles) to represent the possible outcomes of that stage.

- Leave enough space for the entire tree. It can become rather tall.


- A sample experiment:

- Urn A contains cards C, D, and E.

- Urn B contains cards F and G.

- Card E has an H printed on one side and a J on the other side.

- Stage 1: Select either urn A or urn B.

- Stage 2: Draw a card from the selected urn.

- Stage 3: If card E is drawn, flip the card in the air and note which side comes up.

- The Probability of a Particular Leaf - The probability of reaching a particular leaf is

found by multiplying the branch probabilities found on all of the branches leading from the

root to the leaf.

- The Probability of an Event containing Multiple Leaves - The probability of an event

containing multiple leaves is found by finding the probabilities of the leaves in the desired

event, and then adding the probabilities so found. Since they are single outcomes, they are

always pairwise disjoint, so they can be added.
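The sample urn experiment can be written as a nested tree and its leaf probabilities computed by multiplying along each path. The text does not give branch probabilities, so this sketch assumes every choice at every stage is equally likely; the data layout and the function name `leaf_probabilities` are also illustrative.

```python
from fractions import Fraction

half, third = Fraction(1, 2), Fraction(1, 3)

# Each node maps a branch label to (branch probability, subtree).
# A subtree of None marks a leaf. Equal branch probabilities are ASSUMED.
tree = {
    "A": (half, {
        "C": (third, None),
        "D": (third, None),
        "E": (third, {"H": (half, None), "J": (half, None)}),
    }),
    "B": (half, {"F": (half, None), "G": (half, None)}),
}


def leaf_probabilities(node, path=(), prob=Fraction(1)):
    """Multiply branch probabilities from the root down to every leaf."""
    leaves = {}
    for label, (branch_prob, subtree) in node.items():
        new_path = path + (label,)
        if subtree is None:
            leaves[new_path] = prob * branch_prob
        else:
            leaves.update(leaf_probabilities(subtree, new_path, prob * branch_prob))
    return leaves


leaves = leaf_probabilities(tree)
pr_card_E = sum(p for path, p in leaves.items() if "E" in path)

print(len(leaves))                 # 6
print(leaves[("A", "E", "H")])     # 1/12
print(pr_card_E)                   # 1/6
print(sum(leaves.values()))        # 1
```

The event "card E is drawn" contains two leaves (H up and J up), so its probability is the sum of their leaf probabilities.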

- Bayes Probability - Bayes probability is a method of finding Pr(B|A) in a system where it

is easy to find various forms of Pr(A|B).

Use the following procedure:

- The sample space is partitioned into n subsets S1 through Sn.

- Pr(S1|A) = Pr(A|S1) Pr(S1) / [Pr(A|S1) Pr(S1) + Pr(A|S2) Pr(S2) + ... + Pr(A|Sn) Pr(Sn)]

This is easiest to do with a tree.

- Multiply out the leaf probabilities of all of the outcomes where event A happens.

These are Pr(A|Si) Pr(Si) values.

- Find the sum of the probabilities just found.

- Find the leaf with the desired event S1.

- Divide the desired leaf probability Pr(A|S1) Pr(S1) by the sum

calculated above.
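The tree procedure above amounts to a few lines of arithmetic. In the sketch below, the three-part partition and all of its probabilities are invented numbers used only to show the steps, and `bayes_posteriors` is a hypothetical helper name.

```python
from fractions import Fraction


def bayes_posteriors(priors, likelihoods):
    """Return Pr(Si|A) for each part Si of a partition.

    priors      -- Pr(Si) for each part of the partition
    likelihoods -- Pr(A|Si) for each part of the partition
    """
    # Leaf probabilities of the outcomes where A happens: Pr(A|Si) Pr(Si).
    leaf_probs = [like * prior for like, prior in zip(likelihoods, priors)]
    total = sum(leaf_probs)  # this sum is Pr(A)
    return [leaf / total for leaf in leaf_probs]


# Made-up example values (not from the text).
priors = [Fraction(1, 2), Fraction(1, 3), Fraction(1, 6)]
likelihoods = [Fraction(1, 2), Fraction(1, 4), Fraction(1)]

posteriors = bayes_posteriors(priors, likelihoods)
print(posteriors[0])    # 1/2
print(sum(posteriors))  # 1
```

The posteriors always sum to 1, because the events A ∩ S1 through A ∩ Sn form a partition of A.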

Appendix

OBTAINING EQUALLY LIKELY OUTCOMES WITH A RECOMBINATION SCENARIO

A sample case: How many colors can a light panel show by changing lightbulbs?

- The light panel has 6 sockets to take lightbulbs.

- The panel has a perfect diffuser that completely mixes the colors from the 6 bulbs.

- Trading around the bulbs that are already in the sockets does not change the color of

the panel.

- There are 3 colors of bulbs available: Red, Green, and Blue, in an infinite supply.

- n = 3, r = 6.

- That is, 3 kinds of objects and 6 drawings.

- So n+r−1 = 8 and n−1 = 2.

- The number of possible outcomes is:

R(3,6) = (n+r−1)! / (r! (n−1)!) = (3+6−1)! / (6! (3−1)!) = 28

The actual 28 outcomes are:

| Colors | Outcomes | | | | |
| --- | --- | --- | --- | --- | --- |
| 1 | RRRRRR red | GGGGGG green | BBBBBB blue | | |
| 2 | RRRRRG vermillion | RRRRGG amber | RRRGGG yellow | RRGGGG chartreuse | RGGGGG leaf |
| | RRRRRB carmine | RRRRBB cerise | RRRBBB magenta | RRBBBB purple | RBBBBB violet |
| | GGGGGB kelly green | GGGGBB aquamarine | GGGBBB cyan | GGBBBB sky blue | GBBBBB azure blue |
| 3 | RGBBBB light blue | RGGBBB light azure | RGGGBB light aqua | RGGGGB light green | RRGBBB lilac |
| | RRGGBB white | RRGGGB light chart | RRRGBB rose | RRRGGB peach | RRRRGB pink |

There are 3 cases of one color, 15 cases of two colors, and 10 cases of three colors.

Each case is a unique color mixture.
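The list of 28 mixtures can be reproduced by enumeration. This sketch generates every unordered selection of 6 bulbs from the 3 colors and groups them by how many different colors they use.

```python
from collections import Counter
from itertools import combinations_with_replacement

# Every unordered selection of 6 bulbs from Red, Green, and Blue.
mixtures = ["".join(m) for m in combinations_with_replacement("RGB", 6)]

# Group the mixtures by how many different colors each one uses.
by_color_count = Counter(len(set(m)) for m in mixtures)

print(len(mixtures))  # 28
print(by_color_count[1], by_color_count[2], by_color_count[3])  # 3 15 10
```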

Why Conventional Methods Do Not Provide Equally Likely Outcomes:

- Drawing with replacement gives 3^6 = 729 outcomes.

There are 3 cases of one color, 186 cases of two colors, and 540 cases of three

colors.

The extra cases are unwanted duplicate colors with the bulbs switched around in the

sockets.

- Using a tree-staged experiment with bins can't result in 28 outcomes. Multiple branches

would have the same outcome.

- Drawing without replacement either leaves out some colors, or gives duplicate outcomes.
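The counts for ordered drawing with replacement can be verified by enumeration, which also shows why the 28 mixtures are not equally likely under that scheme. In this sketch each ordered drawing is reduced to its mixture by sorting the letters, so mixture names appear in alphabetical order (B, G, R).

```python
from collections import Counter
from itertools import product

# All ordered drawings of 6 bulbs with replacement.
draws = list(product("RGB", repeat=6))

# How many ordered drawings use 1, 2, or 3 different colors.
by_color_count = Counter(len(set(d)) for d in draws)

# How many ordered drawings collapse onto each unordered mixture.
mixture_counts = Counter("".join(sorted(d)) for d in draws)

print(len(draws))  # 729
print(by_color_count[1], by_color_count[2], by_color_count[3])  # 3 186 540
print(len(mixture_counts))       # 28
print(mixture_counts["RRRRRR"])  # 1
print(mixture_counts["BBGGRR"])  # 90
```

Pure red arises from only 1 of the 729 drawings, while white (two bulbs of each color) arises from 90, so the mixtures have very different probabilities.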

Method 1: Prepared Cards (or balls)

The procedure is:

- Prepare one card or ball for each outcome in a table of all possible outcomes, made as

above (this example has 28).

- Choose one card to pick the entire combination.

Some advantages:

- All outcomes are equally likely.

- The cards can be shuffled to provide a nonrepeating sequence of outcomes (28 colors,

in this example).

- A bingo ball machine can be used to either pick or shuffle outcomes on balls.

Some disadvantages:

- A new set of cards must be prepared if a choice changes, if the number of choices changes, or if the number of items taken changes.

- It is easy to accidentally duplicate or leave out a card when preparing the deck.

Method 2: The gap method

The procedure is:

- Make a rule for the ordering of the choices (alphabetic order, numeric order, spectral

order, etc - spectral in this example).

- Prepare r choice balls (or cards) for each available choice in the n choices (6 of each

of 3 colors in this example).

In this example, we will use balls.

- Place one of each kind of choice ball in a bin (3 in this example). Keep the others in

a box for later.

There are now n balls in the bin.

- Also prepare r-1 order balls numbered 1 through r-1 (1 to 5 in the example). (Use letters if the original choices are numbers.)

- Place all of the order balls in the bin.

There are now n+r−1 balls in the bin.

- Simultaneously draw r balls (6 in the example) from the bin.

There are now n−1 balls left in the bin.

- Sort any choice balls drawn according to the rule made earlier and place them in a tray

in sorted order.

- Sort any order balls drawn in order, and place them in a tray in sorted order to the

right of the choice balls.

- Note which of the order balls are missing from the tray. Each one is a gap.

The number of gaps is always one less than the number of choice balls drawn.

- Leaving the first choice ball where it is, place the other choice balls into the gaps.

Keep the choice balls in order.

Note: a gap can appear at either one or both ends of the choice levels.

- Replace each order ball with a choice ball (from the box) identical to the choice ball

immediately to its left.

The balls in the tray now contain the desired choice. All outcomes are equally likely.

Using a ball set R G B 1 2 3 4 5 with "-" as a gap.

Examples:

| Drawing | Gap Finding | Move Choices | Final Outcome |
| --- | --- | --- | --- |
| G12345 | G12345 | G12345 | GGGGGG → green |
| GB1245 | GB12-45 | G12B45 | GGGBBB → cyan |
| RG1234 | RG1234- | R1234G | RRRRRG → vermillion |
| RG2345 | RG-2345 | RG2345 | RGGGGG → leaf |
| RGB123 | RGB123-- | R123GB | RRRRGB → pink |
| RGB135 | RGB1-3-5 | R1G3B5 | RRGGBB → white |
| RGB245 | RGB-2-45 | RG2B45 | RGGBBB → light azure |
| RGB345 | RGB--345 | RGB345 | RGBBBB → light blue |
| RGB145 | RGB1--45 | R1GB45 | RRGBBB → lilac |
| RGB124 | RGB12-4- | R12G4B | RRRGGB → peach |
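The gap method can be sketched in code to check that every one of the C(8,6) = 28 possible drawings leads to a different mixture. The function name `gap_method`, the use of integers for order balls, and the use of single characters for choice balls are all assumptions of this sketch, not part of the original procedure.

```python
from itertools import combinations, combinations_with_replacement


def gap_method(drawn, choices, r):
    """Convert one drawing of r balls into an unordered selection.

    drawn   -- the r balls drawn; choice balls are characters from
               `choices`, order balls are the integers 1 .. r-1
    choices -- the available choices, listed in the agreed ordering rule
    """
    picked = [c for c in choices if c in drawn]  # choice balls, sorted by the rule
    order_drawn = {b for b in drawn if isinstance(b, int)}
    later_choices = iter(picked[1:])
    tray = [picked[0]]                           # the first choice ball stays put
    for position in range(1, r):
        if position in order_drawn:
            tray.append(tray[-1])                # order ball: copy the ball to its left
        else:
            tray.append(next(later_choices))     # gap: the next choice ball moves here
    return "".join(tray)


choices, r = "RGB", 6
bin_contents = list(choices) + list(range(1, r))  # R G B 1 2 3 4 5
outcomes = [gap_method(draw, choices, r) for draw in combinations(bin_contents, r)]
expected = {"".join(m) for m in combinations_with_replacement(choices, r)}

print(gap_method(("R", "G", "B", 1, 3, 5), choices, r))  # RRGGBB
print(len(outcomes), set(outcomes) == expected)          # 28 True
```

Because each of the 28 equally likely drawings maps to a different mixture, the mixtures are equally likely too. A single random drawing could be simulated with `random.sample(bin_contents, r)`.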

Some advantages:

- All outcomes are equally likely.

- The balls (or cards) can be reused if the conditions change. All that are needed at most

are more choice and/or order balls (or cards). Keep the ones unused in the current

experiment for later use.

- A bingo ball machine can be used to pick outcomes. Let r balls out of the machine.

Some disadvantages:

- The rule for the ordering of the choices must be rigidly observed. If it is not, then the

outcomes are not equally likely.

- The procedure is tricky. The user can easily make a mistake.